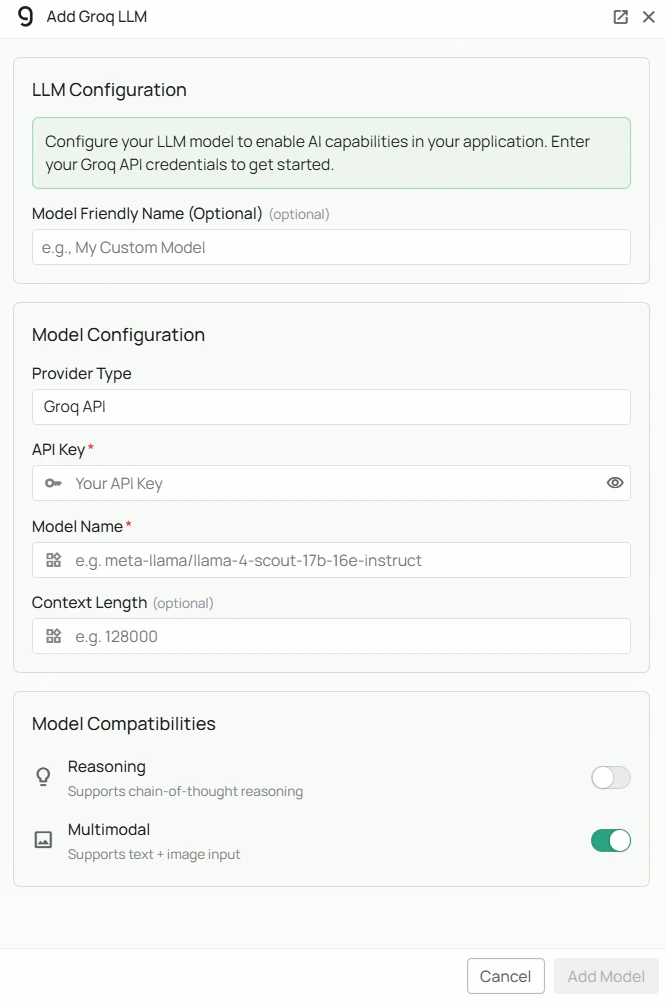

Groq Configuration

Required Fields

API Key *

The API Key is required to authenticate your requests to Groq’s services.

How to obtain an API Key:

- Log in to the Groq Console

- Navigate to the “API Keys” section

- Click “Create API Key”

- Copy the generated API key
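If you want to confirm that a new key works before entering it in Pipeshub, one option is to list the models it can access through Groq’s OpenAI-compatible API. The sketch below assumes the endpoint `https://api.groq.com/openai/v1/models` and Bearer-token authentication. The helper names are illustrative and are not part of Pipeshub.

```python
import json
import urllib.request

# Assumed OpenAI-compatible base URL; confirm against Groq's API reference.
GROQ_BASE_URL = "https://api.groq.com/openai/v1"


def build_models_request(api_key: str) -> urllib.request.Request:
    """Build (but do not send) a request listing the models this key can access."""
    return urllib.request.Request(
        f"{GROQ_BASE_URL}/models",
        headers={
            "Authorization": f"Bearer {api_key}",
            # Explicit User-Agent; some API gateways reject urllib's default one.
            "User-Agent": "groq-key-check-example/0.1",
        },
        method="GET",
    )


def list_model_ids(api_key: str) -> list[str]:
    """Send the request and return model IDs.

    urlopen raises urllib.error.HTTPError on failure, e.g. 401 for a bad key.
    """
    with urllib.request.urlopen(build_models_request(api_key), timeout=10) as resp:
        payload = json.load(resp)
    return [model["id"] for model in payload.get("data", [])]
```

With a valid key, `list_model_ids(api_key)` should return the IDs of models your account can use, which is also handy when filling in the Model Name field. An invalid or revoked key makes `urlopen` raise `urllib.error.HTTPError`, usually with status 401.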

Model Name *

The Model Name field defines which model you want to run on Groq’s infrastructure. Popular models available on Groq:

- meta-llama/llama-4-scout-17b-16e-instruct: Meta’s Llama 4 Scout, a fast and capable instruction-following model

- For fast, general-purpose instruction-following, select meta-llama/llama-4-scout-17b-16e-instruct

- Check Groq’s model documentation for the full list of supported models
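Because the Model Name must match a Groq model ID exactly, a quick local check can catch typos before you save. This is a hypothetical helper, not a Pipeshub or Groq API. It assumes you already have the list of valid IDs (for example, copied from Groq’s model documentation or returned by its models endpoint) and uses Python’s standard `difflib` module to suggest near matches.

```python
import difflib


def check_model_name(name: str, available_ids: list[str]) -> tuple[bool, list[str]]:
    """Return (exact_match, suggestions) for a Model Name entry.

    `available_ids` must be supplied by the caller; this helper fetches nothing.
    """
    candidate = name.strip()  # ignore accidental leading/trailing whitespace
    if candidate in available_ids:
        return True, []
    # Near matches catch typos such as a dropped "meta-llama/" prefix.
    return False, difflib.get_close_matches(candidate, available_ids, n=3, cutoff=0.6)
```

For instance, entering `llama-4-scout-17b-16e-instruct` without its prefix would not match exactly, but the full ID `meta-llama/llama-4-scout-17b-16e-instruct` would be offered as the top suggestion.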

Configuration Steps

As shown in the image above:

- Click Configure on the Groq provider card

- Enter your Groq API Key in the designated field (marked with *)

- Specify your desired Model Name (marked with *)

- Click Add Model to save and validate your credentials

Both the API Key and Model Name are required fields to successfully configure Groq integration.
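The required-field rule can be pictured as a small configuration object. This is a hypothetical model of the form for illustration only; Pipeshub’s actual schema and validation logic are not documented here.

```python
from dataclasses import dataclass


@dataclass
class GroqProviderConfig:
    """Hypothetical mirror of the two required fields on the Groq provider card."""

    api_key: str
    model_name: str

    def missing_fields(self) -> list[str]:
        """Return labels of required fields that are empty or whitespace-only."""
        missing = []
        if not self.api_key.strip():
            missing.append("API Key")
        if not self.model_name.strip():
            missing.append("Model Name")
        return missing
```

An empty result means both fields are filled in; whether the key and model are actually valid is only known once Groq accepts the request.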

Usage Considerations

- API usage counts against your Groq account’s quota and billing

- Pricing varies by model; check Groq’s pricing page for details

- Groq provides:

  - Ultra-low-latency inference on its dedicated LPU (Language Processing Unit) hardware

  - High throughput for concurrent requests

  - Support for popular open-source models, including the Llama and Mixtral families
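Since usage is billed per model, it can help to tally tokens on your side when calling Groq directly. The sketch assumes responses follow the OpenAI-compatible chat completion shape with a `usage` object (`prompt_tokens`, `completion_tokens`, `total_tokens`). The per-million-token prices are parameters you would take from Groq’s pricing page; no real prices are included.

```python
from collections import Counter


def add_usage(totals: Counter, response: dict) -> Counter:
    """Add one response's token counts to a running total.

    Assumes an OpenAI-compatible `usage` object; absent fields count as 0.
    """
    usage = response.get("usage") or {}
    for field in ("prompt_tokens", "completion_tokens", "total_tokens"):
        totals[field] += int(usage.get(field, 0))
    return totals


def estimate_cost(totals: Counter, input_usd_per_million: float,
                  output_usd_per_million: float) -> float:
    """Estimate spend from token totals and per-million-token prices you supply."""
    return (
        totals["prompt_tokens"] * input_usd_per_million
        + totals["completion_tokens"] * output_usd_per_million
    ) / 1_000_000
```

Input and output tokens are priced separately here because many providers bill them at different rates; treat the result as an estimate and rely on the Groq Console for authoritative figures.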

Troubleshooting

- If you encounter authentication errors, verify your API key is correct and has not expired

- Ensure your Groq account has billing set up if you are on a paid tier

- Check that the model name is spelled exactly as Groq lists it; many Groq model IDs use a provider/model-name format (for example, meta-llama/llama-4-scout-17b-16e-instruct)

- Verify that your account has access to the specific model you have selected

- If you are experiencing rate limits, check your usage in the Groq Console dashboard
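Rate limits typically surface as HTTP 429 responses. If you call Groq directly, a common client-side pattern is exponential backoff that honors a `retry-after` header when the server sends one. The sketch below takes the request function (`send`) as a parameter so it can be tested offline; that interface is hypothetical, and Pipeshub’s own retry behavior is not described here.

```python
import time
from typing import Callable, Mapping, Tuple

# Hypothetical sender interface: returns (status_code, headers, body).
Sender = Callable[[], Tuple[int, Mapping[str, str], str]]


def send_with_backoff(send: Sender, max_attempts: int = 5, base_delay: float = 1.0,
                      sleep: Callable[[float], None] = time.sleep) -> Tuple[int, str]:
    """Retry on HTTP 429 with exponential backoff, honoring retry-after if present."""
    for attempt in range(max_attempts):
        status, headers, body = send()
        # Return anything that is not a rate limit, or the final 429 after the last try.
        if status != 429 or attempt == max_attempts - 1:
            return status, body
        fallback = base_delay * 2 ** attempt  # 1s, 2s, 4s, ... with the defaults
        retry_after = {k.lower(): v for k, v in headers.items()}.get("retry-after")
        try:
            delay = float(retry_after) if retry_after is not None else fallback
        except ValueError:
            # retry-after can also be an HTTP date; fall back rather than parse it.
            delay = fallback
        sleep(delay)
    raise ValueError("max_attempts must be at least 1")
```

Backoff only smooths over short bursts. If 429 responses persist, checking your usage in the Groq Console dashboard remains the way to find the limit you are hitting.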